AMD Unveils MI300X AI Chip to Challenge Nvidia’s Dominance

On June 13, Advanced Micro Devices (AMD) disclosed new details about an artificial intelligence (AI) chip that could challenge market leader Nvidia.

The MI300X, AMD’s most advanced graphics processing unit (GPU) for artificial intelligence, will begin shipping in limited quantities in the third quarter of 2023, with volume production starting in the fourth.

AMD’s announcement represents the most significant challenge to Nvidia, which presently controls over 80% of the market for AI chips.

GPUs are the processors that companies like OpenAI use to develop cutting-edge AI applications like ChatGPT.

They have parallel processing capabilities and are optimized for managing large amounts of data concurrently, making them well-suited for tasks requiring rapid and efficient graphic processing.

AMD said that its new MI300X processor and its CDNA architecture were designed to meet the requirements of large language models and other advanced AI models.

With up to 192 GB of memory, the MI300X can accommodate larger AI models than competing processors such as Nvidia’s H100 chip, which supports a maximum of 120 GB of memory.

AMD’s Infinity Architecture combines eight MI300X accelerators in a single system, similar to the systems from Nvidia and Google that link eight or more GPUs for artificial intelligence applications.

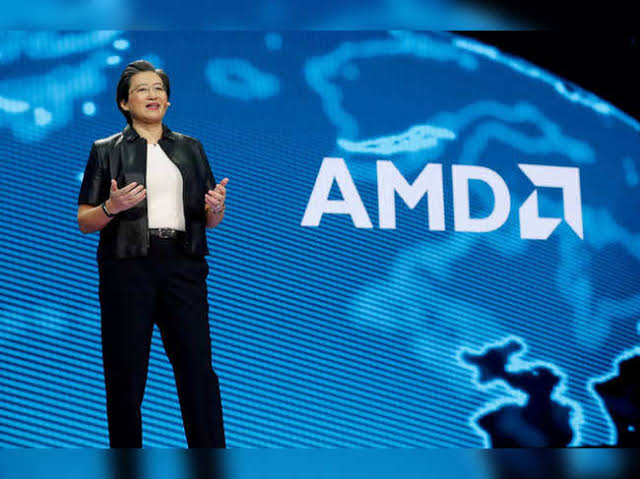

During a presentation to investors and analysts in San Francisco, Lisa Su, CEO of AMD, highlighted AI as the company’s “most significant and strategically important long-term growth opportunity.”

“We think about the data center AI accelerator [market] growing from something like $30 billion this year, at over 50% compound annual growth rate, to over $150 billion in 2027,” Su said.
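The arithmetic behind that projection can be checked with a short sketch. It assumes 2023 as the base year (the year of the presentation), a growth rate of exactly 50% per year, and four compounding periods through 2027. The function name `project_market_size` is illustrative and does not come from AMD.

```python
def project_market_size(start_billion: float, annual_growth: float, years: int) -> float:
    """Compound a starting market size forward at a fixed annual growth rate."""
    return start_billion * (1 + annual_growth) ** years


# Assumed inputs taken from the quote: $30 billion in 2023, 50% CAGR, through 2027.
base_year, target_year = 2023, 2027
projected = project_market_size(30.0, 0.50, target_year - base_year)
print(f"Projected {target_year} market: ${projected:.1f} billion")
```

Under these assumptions, four years of 50% growth multiplies the base by 1.5^4, or roughly five times, which is consistent with the “over $150 billion” figure in the quote.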

If developers and server manufacturers adopt AMD’s AI chips, which the company calls “accelerators,” as alternatives to Nvidia’s products, the chipmaker could tap a substantial new market.

AMD, renowned for its conventional computer processors, stands to gain from the prospective demand shift.

Although AMD did not disclose pricing, the move could put downward pressure on the price of Nvidia’s GPUs, such as the H100, which can cost $30,000 or more.

Lower GPU prices could in turn reduce the overall cost of running resource-intensive generative AI programs.